Microservices Best Practices in 2026: The Architecture Guide Your Team Actually Needs

Microservices promised your team independence. Each service ships on its own schedule, scales on its own terms, and fails without taking the entire system down. That's the pitch. The reality is a bit messier, though, usually delivered in the form of duplicated data, tangled dependencies, and architecture decisions that nobody remembers making.

The problem isn't the pattern itself. It's that most development teams focus on the tactical best practices (separate your databases, add an API gateway, containerize everything) and skip the harder question: how do you govern architecture decisions across dozens of separate services and multiple teams? AI has made writing code faster than ever, but that speed amplifies the cost of bad service architecture. One wrong decomposition decision now propagates across services in hours, not months.

This guide covers the practices that actually matter for running microservices in production, including the one most guides leave out: architectural governance at scale.

What Are Microservices? (And Why Best Practices Matter)

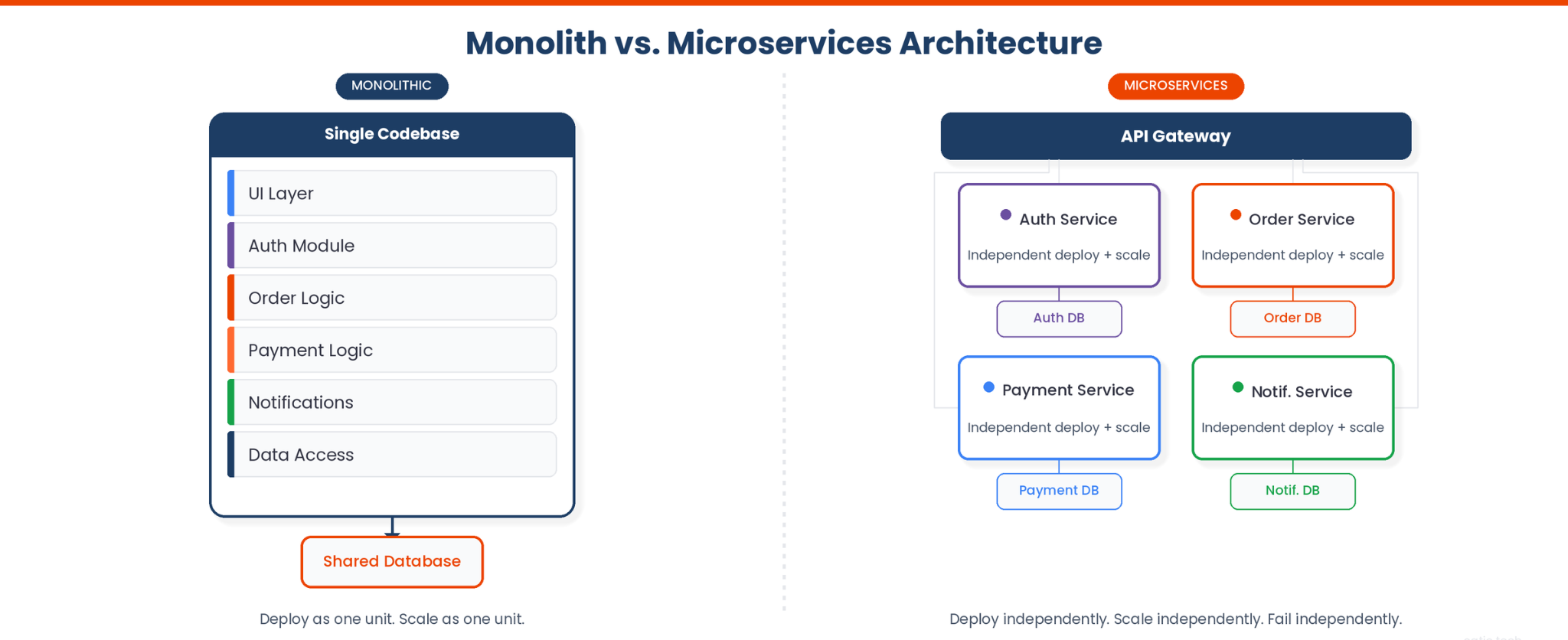

A microservices architecture consists of small, independent services, each responsible for specific business capabilities. Unlike a monolith, where all business functionality lives in a single codebase and deploys as one unit, microservices communicate over network protocols and services typically use HTTP/REST, gRPC with protocol buffers, or async messaging. Each service can be built, deployed, and scaled independently.

The pattern gained traction because monoliths hit scaling walls. When a single codebase has 200 contributors, merge conflicts become a full-time job. When one service's traffic spike requires scaling the entire application, infrastructure costs balloon. Martin Fowler and James Lewis formalized the concept in their 2014 article on microservices, and it became the dominant architecture pattern for companies scaling beyond a handful of development teams.

But here's what those early advocates didn't emphasize enough: microservices multiply the number of architecture decisions your organization makes. Every new service introduces questions about data ownership, communication patterns, deployment strategy, and failure handling. Without a system for making and tracking those decisions, teams end up with a distributed monolith, the worst of both worlds. Tools like Catio's Stacks exist because this problem became acute: teams need a live model of their system to even understand what they've built before they can decide what to change.

Design Each Service Around a Single Business Domain

Apply Domain-Driven Design (DDD) to Define Service Boundaries

The most common microservices failure isn't a technical one. It's drawing the service boundaries incorrectly. Teams that decompose by technical layer (a "database service," an "auth service," a "notifications service") end up with services that can't do anything useful without first calling three other dependent services.

Domain-Driven Design gives you a better heuristic: each service should own a bounded context. A bounded context is a boundary within which particular business rules and domain models apply. In an e-commerce system, "Order Management" is a bounded context. It owns the concept of an order, knows what states an order can be in, and handles all order-related business logic. It doesn't need to know the implementation details of how the payment processor works internally. It just needs to know whether the payment succeeded or failed.

A useful warning sign is if a single service can't handle a business request end-to-end without synchronously calling another service; that's worth examining. If your "Order Service" can't create an order without a real-time call to the "Inventory Service," that's a signal of tight coupling at the service boundaries. Inter-service collaboration is expected, but synchronous dependencies for core operations suggest you should either merge the services or redesign the service interactions to be asynchronous.

Keep Services Loosely Coupled and Highly Cohesive

Loose coupling means that changing one service doesn't require changing other services. High cohesion means everything inside a service relates to the same business functionality. These two properties reinforce each other: a well-bounded service naturally has high cohesion (everything inside it serves one domain) and low coupling (it communicates with other services only through well-defined contracts), following the single responsibility principle at the service level.

The distributed monolith anti-pattern is what happens when you skip this. You've split your code into separate repositories and deployment pipelines, but the services still share the same database, deploy in lockstep, and break when one goes down. You've added the operational complexity of a distributed system without any of the benefits. When teams model their service architecture in a tool like Catio, these hidden dependencies become visible since you can see that Service A calls Service B, which calls Service C synchronously, forming a chain of dependent services that fails as a unit.

Separate Data Storage Per Service

Each microservice should own its data store. That means a separate data store, whether a dedicated database, schema, or table namespace, that no other service reads from or writes to directly. This is the database-per-service pattern, and it's the strongest lever you have for achieving genuine independence in your data management strategy.

Shared databases are a persistent source of hidden coupling in microservices architectures. When multiple services share the same table, a schema change in one service breaks the other. When one service writes data that another service reads directly, you've created an implicit contract that no API versioning strategy can protect, putting data integrity at risk.

How do you handle cross-service data needs? Three approaches, depending on the use case:

- Event-driven synchronization. Service A publishes a "OrderCreated" event. Service B consumes it and stores a local copy of the data it needs. Each service stays eventually consistent without direct database access to the same database.

- API calls. Service B requests data from Service A's API when it needs it. This adds latency and a runtime dependency, so reserve it for cases where stale data is unacceptable.

- CQRS (Command Query Responsibility Segregation). Separate the write model (commands go to the owning service) from the read model (a dedicated read store aggregates data for queries that span multiple services and domains).

The right choice depends on your consistency requirements, latency tolerance, and operational maturity. There's no universal answer, which is exactly why architecture decisions in microservices need structured reasoning rather than tribal knowledge passed between teams.

Design Resilient APIs and Communication Patterns

Use API Gateways for External Traffic

An API gateway sits between your clients and your backend services, handling advanced routing, load balancing, authentication, rate limiting, and protocol translation. Without a gateway or equivalent edge layer (a BFF, an edge router, or a service mesh ingress), every client needs to know the address of every service, and every internal service needs to implement its own authentication logic.

The API gateway is also where you enforce contract versioning. When one service's API changes, the gateway can translate requests from clients still using the old format. This buys you time to migrate consumers without a coordinated big-bang release.

Choose the Right Inter-Service Communication (Sync vs. Async)

Synchronous communication (REST, gRPC) is simpler to reason about but creates runtime coupling. If one service calls another synchronously and that service is down, both fail. For request-reply patterns where the caller needs an immediate response, synchronous works. For everything else, consider asynchronous communication.

Event-driven communication (message queues, event streams) decouples services in time. Service A publishes a message and moves on. Service B processes it when it can. This is more resilient, but it introduces complex interactions around message ordering, idempotency, and eventual consistency.

The choice isn't binary. Most production architectures use both synchronous approaches for user-facing request paths where latency matters and asynchronous approaches for backend services handling background processing, data synchronization, and event-driven workflows.

Implement Circuit Breakers and Retry Logic

When a downstream service starts failing, hammering it with retries makes the problem worse. A circuit breaker detects failure patterns (e.g., 5 consecutive timeouts) and "opens" the circuit, immediately returning an error or fallback response instead of making the call. After a cooldown period, it allows a test request through. If that succeeds, the circuit closes and normal traffic resumes.

Combine circuit breakers with exponential backoff for retries, which generally means waiting 1 second, then 2, then 4, then 8. Add jitter (random variation) so that multiple service instances don't all retry at exactly the same moment. Without jitter, a service recovering from an outage gets hit by a synchronized retry storm that takes it right back down.

Implement Centralized Observability From Day One

Distributed Tracing, Metrics, and Centralized Logging

Debugging a microservices architecture by reading individual service logs doesn't scale. A single user request might touch five services, and when something goes wrong, you need to trace that access request across all five to find where it broke.

Distributed tracing (OpenTelemetry is the leading open standard) assigns a trace ID to each incoming request and propagates it across service boundaries. Every service logs that trace ID, so you can reconstruct the full request path after the fact. Without this, debugging a latency spike in a distributed environment becomes a multi-hour detective hunt through disconnected log files.

Centralized logging aggregates logs from all services into a single searchable store. Structured logging (JSON format with consistent fields like service_name, trace_id, request_id, duration_ms) makes this searchable. Unstructured log lines ("Error: something went wrong") are almost useless at scale.

Metrics (request rate, error rate, and latency percentiles) give you the dashboard view for monitoring system health. Traces give you the deep-dive capability. You need both. Architecture visibility across your entire tech stack adds a third dimension, allowing you to understand which services exist, how they connect, and what infrastructure they run on. You can't observe what you can't see.

Health Checks and Service-Level Objectives (SLOs)

Every service should expose a health endpoint that reports its current status. Kubernetes uses these for liveness and readiness probes. Then, if a service instance that fails its liveness check gets restarted, a service instance that fails its readiness check stops receiving traffic.

SLOs (Service-Level Objectives) set explicit targets for reliability. "The order service will respond to 99.9% of requests within 200ms." This gives cross-functional teams a shared definition of "healthy" and a threshold for when to stop shipping features and focus on reliability. Without SLOs, reliability is subjective, and arguments about "good enough" become political instead of data-driven.

Secure Your Microservices Architecture

Authentication and Authorization Across Services

In a monolith, authentication happens once at the perimeter. In microservices, every service-to-service call introduces security risks. A service mesh with mutual TLS (mTLS) provides network security by encrypting traffic between internal services and verifying that both ends are who they claim to be. This prevents an attacker who compromises your internal network from impersonating a service.

For user-facing authorization, issue a JWT (JSON Web Token) at the API gateway and pass it through the service chain. Each service validates the token and enforces authorization, whether through role-based access control, scope-based claims, or a policy engine, against its own domain. Each service should own the authorization decisions for the resources it manages, though how you distribute that logic (embedded checks, a centralized policy service, or a hybrid) depends on your team's needs.

Zero Trust and Network Policies

Zero trust means no service trusts another just because they share a network. To secure microservices, every access request is authenticated and authorized, regardless of origin. Kubernetes NetworkPolicies let you define which services communicate with each other, enforcing the principle of least privilege for microservices security at the network level.

Secrets management (HashiCorp Vault, AWS Secrets Manager, or your cloud provider's native solution) avoids hardcoding credentials in config files. Be deliberate about whether secrets are injected as mounted files, environment variables, or fetched at runtime from an external provider. Rotate secrets automatically regardless of the injection method. If a service's credentials are compromised, a short rotation interval limits the blast radius.

Containerize and Automate Deployment

Use Containers and Orchestration (Kubernetes)

Containers are the natural deployment unit for microservices because they package each service with all its dependencies into a portable artifact. Your order service runs the same way on a developer's laptop, in staging, and in production.

Although full-blown orchestration isn’t always required for smaller architectures, if you do need it, Kubernetes handles the operational complexity of scheduling containers across nodes, restarting failed instances, running multiple instances for load balancing, and rolling out updates without downtime. If you're running more than a handful of services, container orchestration becomes effectively necessary. The operational overhead of managing and deploying services manually across environments grows faster than your team.

CI/CD Pipelines for Independent Service Deployment

Independent deployment is the whole point of microservices. If you can't deploy services independently, deploying Service A without also deploying Service B, you've lost the primary benefit of the architecture. Each service needs its own continuous integration and continuous deployment pipeline with automated testing that builds, tests, and deploys independently.

Feature flags let you deploy code without exposing it to users. Deploy the code on Monday, enable the feature for 5% of users on Wednesday, and roll it out to everyone on Friday. If something breaks, disable the flag without a rollback. This decouples the entire release process from business decisions, and it's especially valuable in microservices development where coordinating deployments across dependent services is expensive.

Govern Your Architecture as It Scales

Every practice in this guide works when you have 5 simple services and one team. The real challenge starts at 20 services across four cross-functional teams, and becomes critical at 100+ services across a dozen teams. At that scale, architecture decisions fragment. The service team building the payment service doesn't know what patterns the service team building the notification service chose, or why. Tribal knowledge replaces documentation. Standards drift.

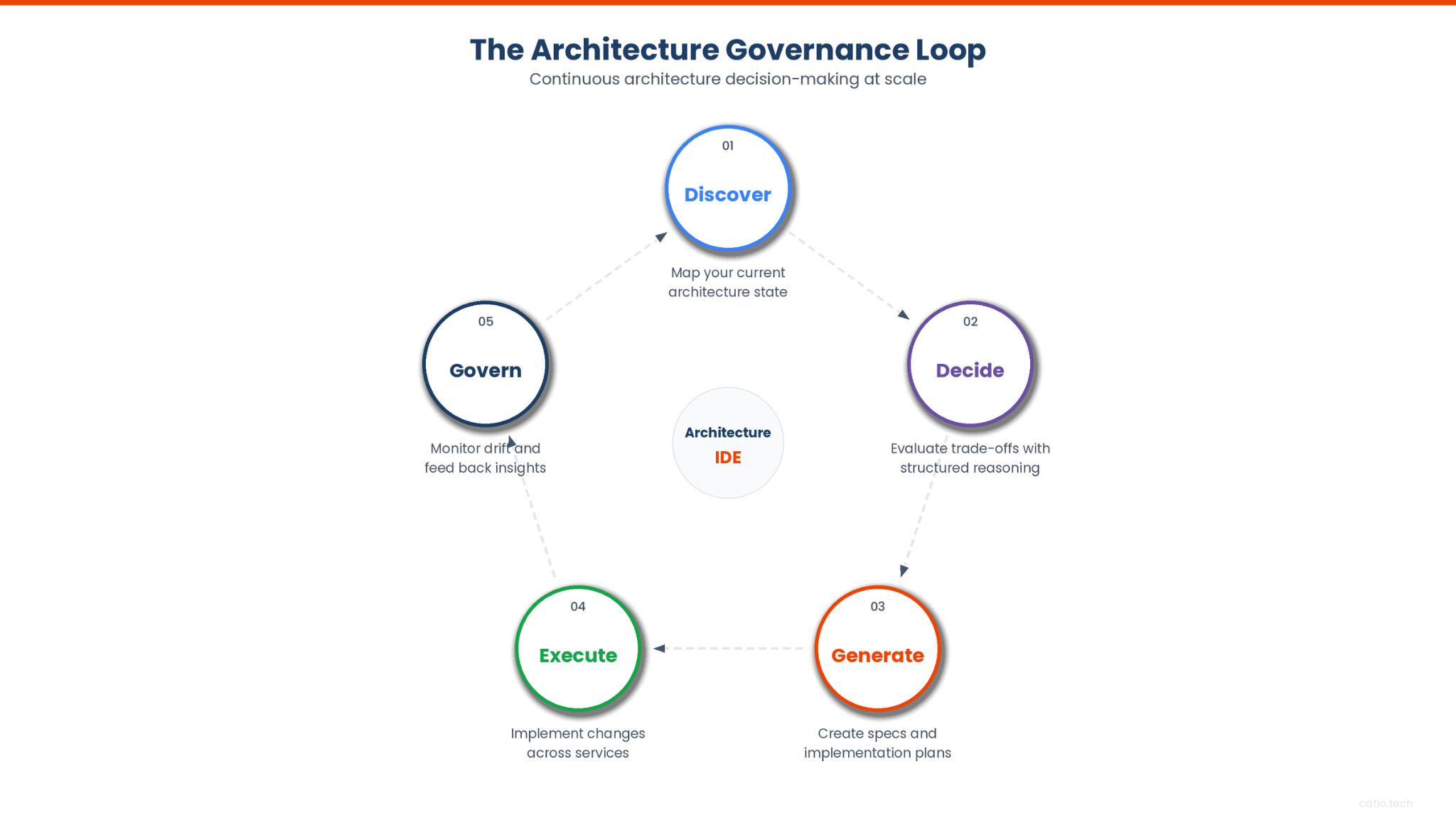

Architecture governance is the practice most "microservices best practices" lists skip, but it's what determines whether the other nine practices hold up over time.

Governance doesn't mean a review board that rubber-stamps decisions. It means having a system for making architecture decisions visible, reasoned, and traceable. Specifically:

- Visibility. Can you see your entire microservices estate, all services, their dependencies, their infrastructure, their APIs, in one place? Not in a Confluence page someone updated six months ago. A live model that stays current as your system changes.

- Reasoning. When a team proposes decomposing a monolith into microservices, can they explore the trade-offs in a structured way? Cost, risk, timeline, operational complexity? AI-powered architecture reasoning lets teams ask questions like "What are the trade-offs of splitting this service into three?" and get answers grounded in their actual system, not generic advice.

- Decisions with data. When evaluating a modernization pathway, does the team see real numbers (cost projections, risk assessments, estimated timelines) or just opinions? Strategic recommendations with explicit trade-offs replace gut feelings with data-backed options.

The Discover, Decide, Generate, Execute, Govern loop is how this works in practice. You discover your current architecture state. You decide on changes using structured reasoning. You generate specs and implementation plans. You execute them. And you govern the results, feeding observations back into the next cycle. Without this loop, architecture decisions become one-way doors that nobody revisits until something breaks.

Common Microservices Anti-Patterns to Avoid

The distributed monolith. You've split your code into separate services, but they share a database, deploy together, and break together, making them dependent services in everything but name. You have all the operational complexity of microservices with none of the benefits. The fix here is to enforce database-per-service and eliminate synchronous call chains.

Nano-services. Services so small they can't do anything useful alone. If every business operation requires orchestrating five services, you've over-decomposed. Each service should handle a meaningful business capability, not a single function. When in doubt, start with larger services and split later. It's much easier to divide a well-understood service than to merge poorly-understood ones.

The synchronous chain. Service A calls B, which calls C, which calls D. Latency compounds. If any link fails, the entire system fails along the chain. Introduce asynchronous messaging for non-critical paths and keep synchronous dependency chains as short as possible.

Ignoring data consistency. Database-per-service means cross-service workflows push toward eventual consistency, because distributed ACID transactions are costly and typically avoided. Teams that ignore distributed transaction management and pretend their distributed system still has strong consistency across services end up with data integrity issues and race conditions. Design for eventual consistency explicitly. Use saga patterns for transaction management across multiple services.

The architecture amnesia problem. Teams make decisions, implement them, and move on. Six months later, nobody remembers why the notification service uses Kafka while the payment service uses RabbitMQ. Was that intentional? Was there a trade-off analysis? Without a system for recording and revisiting architecture decisions, every choice becomes an accident of history. This is where tools like Catio's Recommendations add value: they document the reasoning behind decisions, not just the outcomes.

Build Your Architecture Decision System

The tactical practices in this guide, from service boundaries to observability to security, are necessary. But they're not sufficient. Every team that's struggled with microservices at scale will tell you the same thing: the technical patterns in software development are the easy part. The hard part is coordinating architecture decisions across teams, keeping your mental model of the system accurate, and ensuring that today's decisions don't become tomorrow's technical debt.

That's an architecture decision problem, not a coding problem. And it's why the most important practice isn't on most lists: having a system for reasoning about your architecture as it evolves.

If you're managing a growing microservices estate and want to see how structured architecture reasoning works in practice, book a demo to see how Catio helps teams make better decisions, from initial decomposition to ongoing governance.

FAQ: Microservices Best Practices

What is the role of an API gateway in microservices?

An API gateway acts as the single entry point for all client requests to your microservices. It handles advanced routing (directing requests to the right service instance), cross-cutting concerns (authentication, access control, rate limiting, request logging), and protocol translation (converting external REST requests to internal gRPC calls, for example). Without one, every client must know every service's address, and authentication logic gets duplicated across services.

How do you handle data consistency in microservices?

You design for eventual consistency rather than trying to maintain strong consistency across services. The saga pattern coordinates transactions that span multiple services: each service performs its local transaction and publishes an event. If a downstream step fails, compensating transactions undo the previous steps. For read-heavy workloads that need data from multiple services, the CQRS pattern maintains a separate read model that aggregates data from event streams, keeping each service's own data store as the source of truth.

When should you not use microservices?

Don't start with microservices. Start with a well-structured monolith and decompose when you hit a specific scaling or organizational pain point. If your team is small (fewer than 10 engineers), a monolith is almost always simpler to build, deploy, and debug. If your system doesn't need different components to scale independently, microservices add operational overhead to your software development process without a payoff. The overhead of a distributed environment (network latency, partial failures, data consistency) is real, and it's only worth accepting when the benefits (independent deployment, team autonomy, targeted scaling) outweigh the costs.

How do you structure a successful microservices architecture?

Structure services around business domains, not technical layers. Use Domain-Driven Design to establish clear service boundaries through bounded contexts. Each service consists of its own data management layer, exposes a well-defined API, and supports independent deployment. Invest in observability (distributed tracing, metrics, centralized logging) from day one, not as an afterthought. And build architecture governance into your process so that decisions are visible, reasoned, and revisitable as your system grows.